I don’t know who this guy is, but I’m with him at least on this.

I don’t know the guy, but I do know the site, which is really nice for seeing how other games implemented UI. Makes complete sense for AI assholes to want all that data.

& they’ll get that data one way or the other

I drink your milkshake Elijah. I drink it up!

He’s a weird guy.

Makes a website sourcing screenshots from games for UI inspiration.

Sources UI art from Twitter with a bunch of contributors.

Has a boyfriend.

Active on Twitter, a site where you can “Heil Hitler” but you get blocked by saying Cis.

It seems we’ve come full circle with “copying is not theft”… I have to admit I’m really not against the technology in general, but the people who are currently in control of it are all just the absolute worst people who are the least deserving of control over such a thing.

Is it hypocritical to think there should be rules for corporations that don’t apply to real people? Like why is it the other way around and I can go to jail or get a fine for sharing the wrong files but some company does it and they just say it’s for the “common good” and they “couldn’t make money if they had to follow the laws” and they get a fucking pass?

Yeah, I’ve been a pirate for so long I have zero moral grounds to be against using copyrighted stuff for free…

Except I’m not burning a small nation’s worth of energy to download a NoFX album and I’m not recreating that album and selling it to people when they ask for a copy of Heavy Petting Zoo (I’m just giving them the real songs). So, moral high ground regained?

On the other hand, now I can download all the music I want because I’m training an AI. (Code still under development).

ITT: People who didn’t check the community name

I mean it does show up on the feed as normal and sometimes people feel like it’s fine to give a differing perspective to such communities.

To be fair, I thought I blocked this community…

Sure… And you just had to reply with that info.

Then there’s always that one guy who’s like “what about memes?”

MSpaint memes are waaaaay funnier than Ai memes, if only due to being a little bit ass.

Ass is the most important thing in a meme

EDIT: I feel like I could have written this better but I will stand by it nonetheless

Thank you for your service

Plus it lets people with… let’s say, to be nice about it, no creative ability to speak of… make memes.

Anyone can have creative ability if they actually try though. And a lot of the low tech, low quality tools can produce some fun results if you embrace the restrictions.

So many in these comments are like “what about the ethical source data ones?”

Which ones? Name one.

None of the big ones are. Wtf is ethically sourced? E.g. eBay wants to collect data for AI shit. My mom has an account, and she could opt out of them using her data, but when I told her about it, she told me that she didn’t understand. And she moved on. She just didn’t understand what the fuck they are doing and why she should care. But I guess it is “ethically” sourced as they kinda asked by making it opt out, I guess.

That surely is very ethical and you cannot criticize it… As we all know, a 50yo adult fucking a 14yo would also be totally cool as long as the 14yo doesn’t say no. Right? That is how our moral compass works. /S

Fucking disgusting. All of you tech bros complain about people not getting AI or tech in general and then talk about ethically sourced data. I spit on you.

I love IT, I work in it and I live it, but I have morals and you could too

Edit: after a bunch of messages telling me that I am wrong, I wonder when they will realize that they are making my point. I am saying that it isn’t ethically sourced without consent, and uninformed consent isn’t consent. And they are telling me, an IT professional with an interest in how machine learning functions ever since AlphaGo and 7 years before the AI hype, that I don’t understand it. If I don’t understand it, what makes you believe the general public understands it and can consent to it? If I am wrong about AI, I am wrong about AI, but I am not wrong about the unethical nature of that data: people don’t understand it.

I don’t mean to “um achtually” you or diminish the point you’re making, but I would like to highlight one example of an ethically trained AI.

Voice Swap pays artists to come in and record data for training; the artists then get royalties any time someone uses their voice. I discovered it through Benn Jordan’s video about poisoning music tracks against AI training.

Yeah, except royalties in music are almost always a joke. Those artists are going to make much less off their AI voice than if they actually appeared in studio and the end product is going to be worse. If AI cost the same or more, there would be no market for it. Relevant story about Hollywood actors who sold AI likenesses.

Even if it was actually “ethically trained”, the end result is still horrible.

Also, paying to have an AI Snoop Dogg in your song is the lamest shit I’ve ever heard.

Removed by mod

Someone saying whatever heinous shit they want using your voice seems a bit unethical, as does getting paid pennies for it.

Removed by mod

That AI was trained on absolute mountains of data that wasn’t ethically gained, though.

Just because an emerald ring is assembled by a local jeweler doesn’t mean the diamond didn’t come from slave labor in South Africa.

deleted by creator

Mozilla’s Common Voice seems pretty cool, but I’m not sure if that counts.

It’s fun to record the clips.

deleted by creator

Which ones? Name one.

What’s wrong with what Pleias or AllenAI are doing? Those are using only data in the public domain or suitably licensed, and are not burning tons of watts on the process. They release everything as open source. For real. Public everything. Not the shit that Meta is doing, or the weights-only DeepSeek.

It’s incredible seeing this shit over and over, especially in a place like Lemmy, where the people are supposed to be thinking outside the box, and being used to stuff which is less mainstream, like Linux, or, well, the fucking fediverse.

Imagine people saying “yeah, fuck operating systems and software” because their only experience has been Microsoft Windows. Yes, those companies/NGOs are not making the rounds on the news much, but they exist, the same way that Linux existed 20 years ago, and it was our daily driver.

Do I hate OpenAI? Heck, yeah, of course I do. And the other big companies that are doing horrible things with AI. But I don’t hate everything in AI, because I happen not to be an ignoramus who sees only 99% of it.

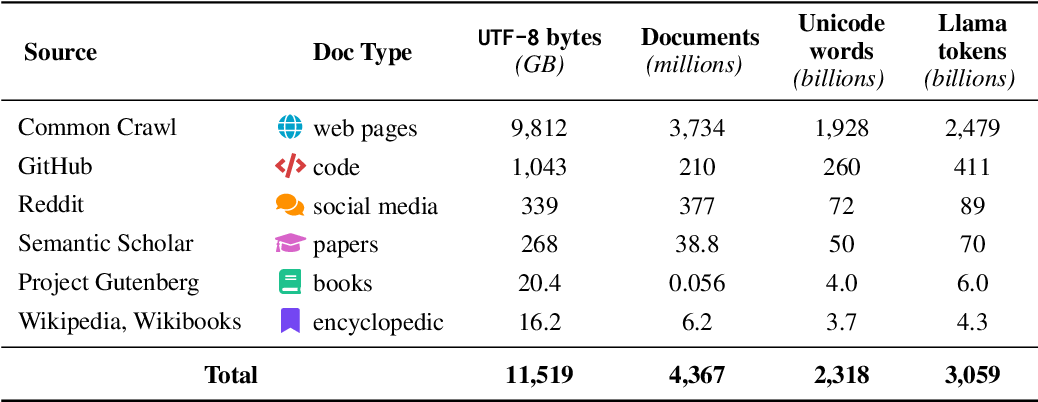

AllenAI has datasets based on GitHub, Reddit, Wikipedia and “web pages”.

I wouldn’t call any of them ethically sourced.

“Web pages” is vague as fuck and makes me question if they requested consent of the creators.

“Gutenberg project” is the funniest tho.

Writing GitHub, Reddit and Wikipedia tells me very clearly that they didn’t. They might have asked the providers, but that is not the creator. Whether or not the provider has a license for the data is irrelevant on moral grounds unless it was an opt-in for the creator. Also it has to be clearly communicated. Giving consent is not “not saying no”, it is a yes. Uninformed consent is not consent.

When someone posted on Reddit in 2005 and forgot their password, they can’t delete their content from it. They didn’t post it with the knowledge that it would be used for AI training. They didn’t consent to it.

Gutenberg project… Dead authors didn’t consent to their work being used to destroy a profession that they clearly loved.

So I bothered to check out 1 dataset of the names that you dropped and it was unethical. I don’t understand why people don’t get it.

What is wrong? That you think that they are ethical when the first dataset that I look at, already isn’t.

We generally had the reasonable rule that property ends at death. Intellectual property extending beyond the grave is corporatist 21st century bullshit. In the past all writing got quickly into the public domain like it should. Depending on the country, that happened somewhere between 25 years after the publishing date and the author’s death. Project Gutenberg reflects the law and reasonable practice to allow writing to go into the public domain.

Good focus on 1 point, sadly bad point to focus on.

What is lawful and legal, is not what is moral.

The Holocaust was legal.

Try again. Let’s start. Should the invention of AI have an influence on how we treat data? Is there a difference between reproducing a work after the author’s death and using possibly millennia of public domain data to destroy the economic viability of a profession? If there is, should public domain law consider that? Has the general public discussed these points and come to a consensus? Has that consensus been put in law?

No? Sounds like the law is not up to date with the tech. So not only is legal not moral, legal isn’t up to date.

You understand the point of public domain, right? You understand that even if you were right (you aren’t), that it would resolve the other issues, right?

Yes. We should never have been idiotic with patents and other forms of gatekeeping information. Information is always free and all forms of controlling it are folly.

Then don’t gatekeep e.g. your naked body and your loved one’s secrets! Information should always be free and all forms of controlling it are folly! Do it. While you are at it, your, and your family’s, full name and place of employment please. Thanks!

Oh wait, you don’t want to do that right? Some information is private. You have some rights on some information. Ok then let’s talk about it.

Not what we are talking about. But you know that. Do you want to explain how to police public information without it being folly?

I don’t know where you got that image from. AllenAI has many models, and the ones I’m looking at are not using those datasets at all.

Anyway, your comments are quite telling.

First, you pasted an image without alternative text, which is harmful for accessibility (a topic in which these kinds of models can help, BTW, and it’s one of the obvious no-brainer uses in which they help society).

Second, you think that you need consent for using works in the public domain. You are presenting the most dystopic view of copyright that I can think of.

Even with copyright in full force, there is fair use. I don’t need your consent to feed your comment into a text to speech model, an automated translator, a spam classifier, or one of the many models that exist and that serve a legitimate purpose. The very image that you posted has very likely been fed into a classifier to rule out that it’s CSAM.

And third, the fact that you think that a simple deep learning model can do so much is, ironically, something that you share with the AI bros that think the shit that OpenAI is cooking will do so much. It won’t. The legitimate uses of this stuff, so far, are relevant, but much less impactful than what you claimed. The “all you need is scale” people are scammers, and deserve all the hate and regulation, but you can’t get past those and see that the good stuff exists, and doesn’t get the press it deserves.

https://allenai.org/dolma then you scroll down to “read dolma paper” and then click on it. This sends you to this site. https://www.semanticscholar.org/paper/Dolma%3A-an-Open-Corpus-of-Three-Trillion-Tokens-for-Soldaini-Kinney/ad1bb59e3e18a0dd8503c3961d6074f162baf710

- Funny how you speak about e.g. text to speech AI when I am talking about LLMs and image generation AIs. It is almost as if you didn’t want to criticize my point.

- It is funny how you use legal terms like copyright when I talk about morality. It is almost as if I am not saying that you shouldn’t be legally allowed to work with public domain material, but that you shouldn’t call it ethical when it is not. It is also funny how you say it is fair use. I invite you to turn the whole of Harry Potter from text to speech and publish it. It is fair use, isn’t it? You know that you wouldn’t be in the right there. But again, this isn’t a legal argument, it is a moral one.

- Who said that I think it could replace writers or painters in quality or skill? I said it could ruin the economic viability of the profession. That is a very, very different claim.

I want to address your statement about my telling behavior. Sorry, you are right. I am sorry for the screen reader crowd. You all probably know that alt text could be misleading and that something someone says on the internet isn’t a reliable source. So I hope you can forgive me, as you did your own simple research into AllenAI anyway.

It’s incredible seeing this shit over and over, especially in a place like Lemmy, where the people are supposed to be thinking outside the box, and being used to stuff which is less mainstream, like Linux, or, well, the fucking fediverse.

Lemmy is just an opensource reddit, with all the pros and cons

It’s such a strange take, too. Like why do we have to include AI in our box if we fucking hate it?

What the fuck data collected could eBay use to train AI? The fact people buy Star Trek figurines??

You could train it to analyze sales tactics for different categories of items or even for specific items, then offer the AI’s conclusions as an ‘AI assistant’ locked behind a paywall.

Plenty of use cases for collecting e-commerce data.

To sell you more stuff, that is how Amazon got ahead of the competition.

Thanks for making my point. People don’t understand and therefore can’t consent and therefore it isn’t ethically sourced data.

Mate, supercilious comments like this also do not help. They make you look like a raging boy crying wolf.

“Ah yes someone expressed incredulity at the viability of the business practice in this instance. I must tell them they are the problem.”

I mean you had a chance to point out the issues in depth handed to you on a fuckin’ plate but instead you chose to jam your head up your own butt.

deleted by creator

Who is “they” in “they are the problem”?

Because they are if you mean the companies and their supporters

dear lord man, read the comment

Dear lord man, write more understandable comments.

Why is it an issue to tell the class who you think I am blaming? Would that ruin your point?

One ethical AI usage I’ve heard was a few artists who take an untrained bot and train it on only their own artwork

What’s an “untrained bot”? Did they code it from scratch themselves? I find it almost impossible to believe it wasn’t just a fork of an existing, unethical project but I’d love more detail

I remember they said they bought it and explained how they used it to increase their own productivity and how being trained on other artwork was a detriment because it wouldn’t generate in their style. Probably was a fork of an unethical project lol

Ethical small data: https://youtu.be/eDr6_cMtfdA

Removed by mod

Did you read the whole comment? You understand that I was sarcastic and I followed it by hinting at the idea that “she didn’t say no” is not considered consent in e.g. sexual encounters, raising the question of why it would be here?

So we agree. You just misunderstood my comment.

Removed by mod

Tfw a community is called fuck_ai so you decide to march in and defend the honour of Sam Altman.

It’s the same with c/Linux and folks marching in and defending Windows to the death. Some people just like to be contrarian ¯\_(ツ)_/¯

Honestly, I didn’t intend to block a dozen AI Bros today, but this has been like shooting fish in a barrel.

Every now and then you gotta rattle the trees.

Damn, I had no idea the Game UI DB guy was so based. Huge respect from me.

Totally lost, can someone give me a tldr?

deleted by creator

deleted by creator

Bit of a tangent from the post, but you raise a valid point. Copying is not theft, I suppose piracy is a better term? Which on an individual level I am fully in support of, as are many authors, artists and content creators.

However, I think the differentiation occurs when pirated works are then directly profited off of; at least, that’s where I personally draw the distinction. Others may have their own red lines.

e.g. stealing a copy of a textbook because you can’t otherwise afford it is fine by me; but selling bootleg copies, or answer keys directly based off it, wouldn’t be OK.

If your entire work is derived from other people’s works then I’m not okay with that. There’s a difference between influence and just plain reproduction. I also think the way we look at piracy from the consumer side and stealing from the creative side should be different. Downloading a port of a Sega Dreamcast game is not the same as taking someone else’s work and slapping your name on it.

I haven’t heard of this before, but it looks interesting for game devs.

Game UI Database: https://www.gameuidatabase.com/index.php

Me either. Seems like it would be a really handy site to use if you were making your own game and wanted to see some examples or best practices.

Had no idea it existed, I don’t make games though. But it’s always so cool to see something built that serves a unique niche I had never thought of before! Consulting used to be like that for me, but after a while it was always the same kinds of business problems, just a different flavor of organization.

Damn straight!

Removed by mod

This is what luddites destroying factories must have been like lmao

luddites destroying factories

Every time a techbro parrots the word ‘luddite’ I want to cause them physical harm.

No, it is not - there is empirical evidence that AI is accelerating climate change. Every AI model has been trained on stolen or unethically sourced data. Your strong desire to create something that you can make money off of is not a moral justification for using AI.

deleted by creator

I didn’t, I very rarely report people.

deleted by creator

Everything is accelerating climate change. I don’t see how your wish to hurt people you don’t know (kinda weird btw?) has anything to do with it.

Gettem’ Jax

Weird considering at least 3 other people did see the point.

If I’m a luddite you’re a troglodyte.

OMG you pwned me with Internet points

Points mean nothing on this platform, I’d do my best to start deprogramming the redditor out of you.

Yeah they made sense and had a point, but clowns with bad takes won anyway cuz ‘muh capitalism’ and ratfucked the human race while their cheerleaders hooted like chimps on meth

Read a book, Slappy

The general rule was it had to be 25 percent different. This is why AI can’t directly copy an image. You may remember some horrendous boundary pushing of art in the 2000s, like that artist who straight up blew up celebrities’ and media influencers’ Instagram posts and sold massive photos of them directly without giving the influencer/celebrity a cent. Avril Lavigne’s ex published her song lyric notes and won the case against her. Copyright has always been awful. The Marvin Gaye estate is notorious for bluffing that his IP is stolen, but music can legally sample 6 seconds of any song or sound without permission. Robin Thicke’s song was completely different, and when that family is hard up they go after another obscure artist. Don’t be swayed. If it’s original, it’s original. Not like Selena’s “Fotos y Recuerdos” and “Back on the Chain Gang” by the Pretenders, that one was blatant. And all she did was change the lyrics back in the 80s. Copyright changes, but you are protected just like the big guys. Don’t be afraid to create, you’ll be missing out on experience. Copy, don’t worry about originality, just make art. Trust me, I couldn’t paint more than a stroke for years because of fear of being a copycat, infringing and unoriginal. Just copy, copy until you have your own style. I promise it will come. It’s impossible for two people to play the Moonlight Sonata exactly the same. I was friends with an Oxford music professor. He can tell anyone by the way they play a piano. The nuances are always going to show. You’re too original, you’re not a robot. Even 3D printers never print the same piece the same because of environmental factors.

All you need to know is change your art 25 percent from the original, even if it is just color choice. And anything you publish online is automatically protected in American courts. It doesn’t matter if you copy AI. If it’s 25 percent different, it’s yours. Also I’ll remind you that AI legally cannot duplicate images to infringe on copyright. That’s why all images look slightly off. The nuances are set with parameters partially to keep it legal. If courts find it is a copy beyond artistic expression, then in come the hammers and bats to the AI server stacks. Serious.

Missing the point.

The ai companies used works of people without their permission to create the ai to then dump the prices of the labor to force the creators out of the business. The quality is worse but good enough for a lot of work. You can say “but that is how capitalism works” but you would be wrong, because they stole in step one.

music can legally sample 6 seconds of any song or sound without permission

GREAT article on copyrights and sampling. I learned a LOT, mostly that people are making all sorts of dumb rationalizations as to why their version of sampling is perfectly fine, when it’s not.

It’s pretty clear that suno.com is committing massive copyright fraud.